Author: Gianmarco Sirei, PMP

Introduction

Artificial Intelligence is advancing at an extraordinary pace. Capabilities are improving, benchmarks are rising, and adoption is accelerating across industries.

Yet a critical gap remains.

AI systems in real-world use are not stable, predictable, or self-governing. Strong technical performance does not automatically translate into reliable outcomes, controlled risk, or sustainable systems.

As AI becomes embedded in business processes, the challenge is no longer just building models. It is managing them.

This is why project management is becoming essential in the age of AI.

From Capability to Control

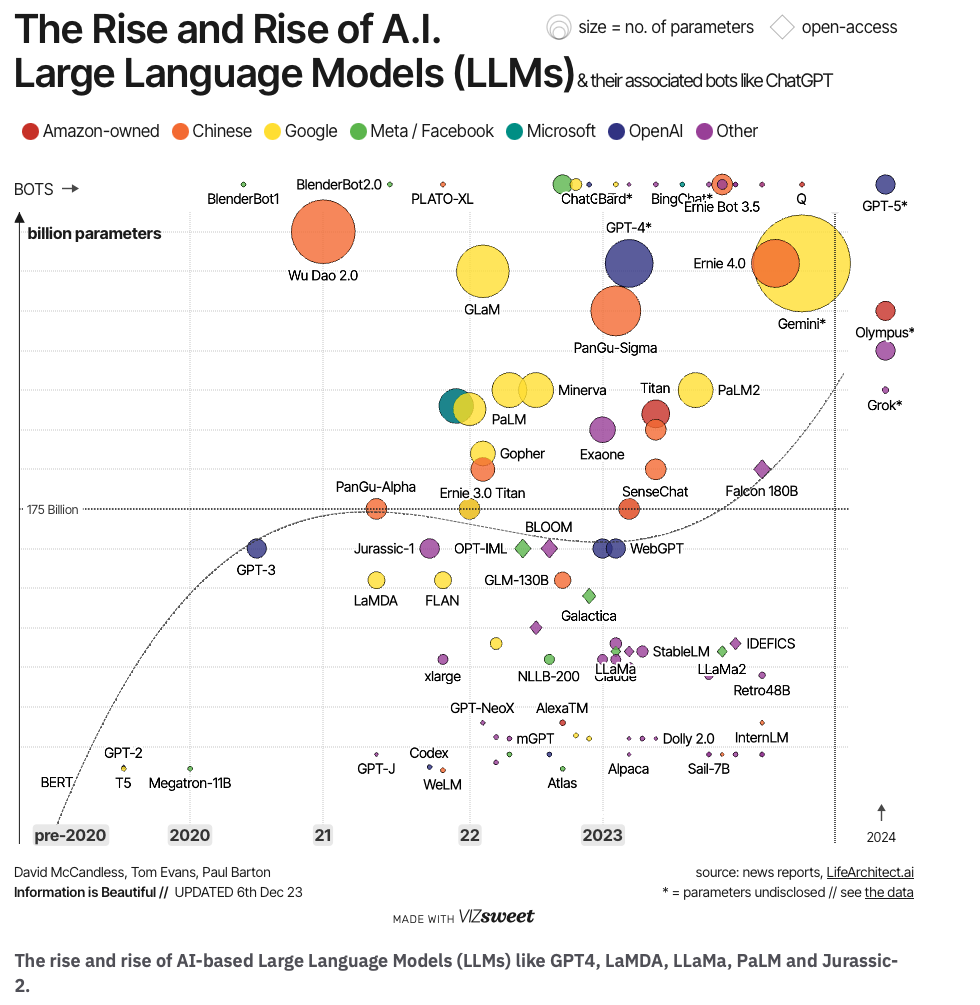

Much of the current conversation around AI focuses on what models can do. And there is no doubt that progress has been remarkable. In areas such as reasoning, coding, and multi-domain understanding, performance has improved dramatically in a short time.

However, this progress tells only part of the story.

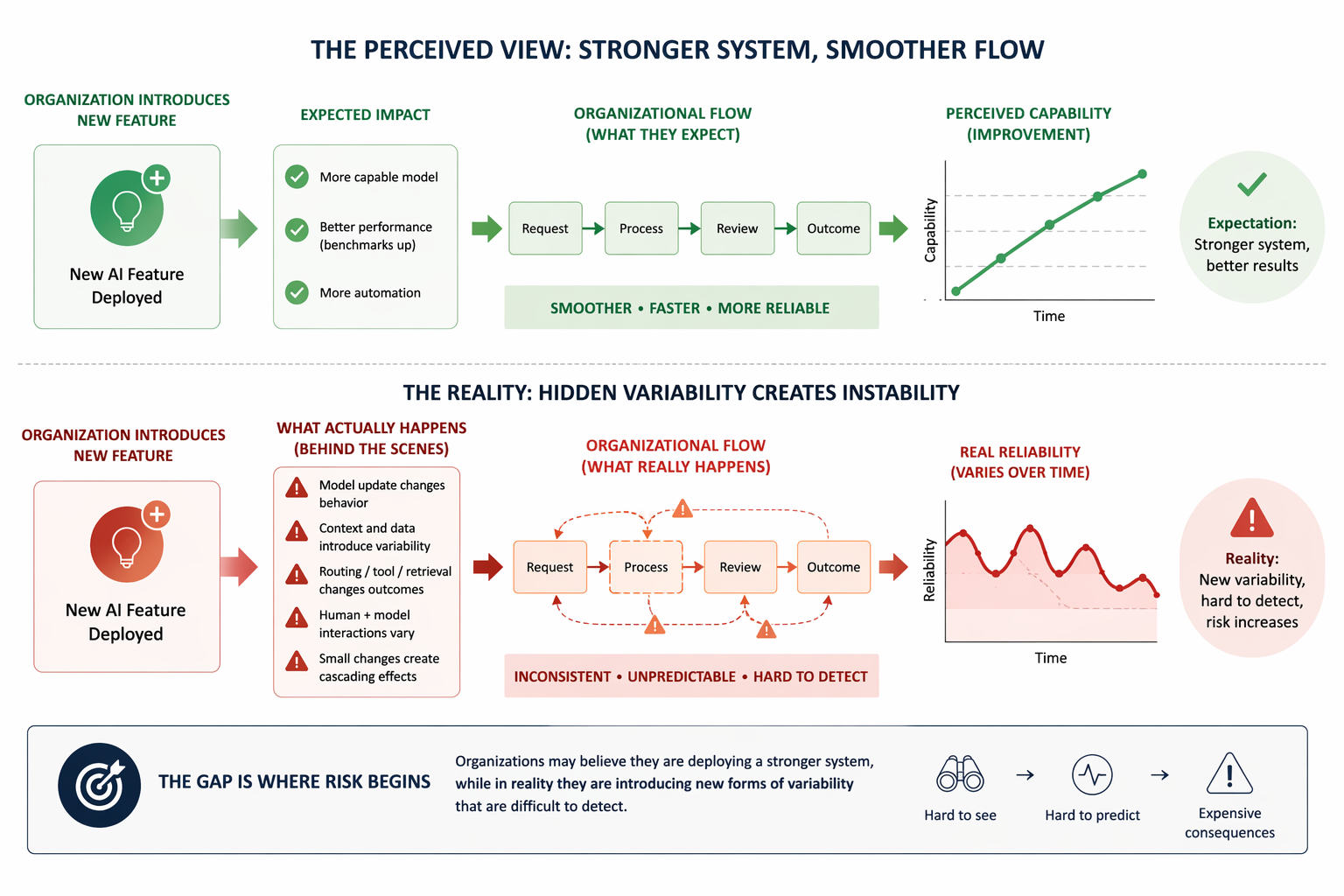

In real environments, AI systems behave differently than they do on controlled benchmarks. Outputs may vary depending on context. Behaviour can shift between model versions. Even small changes in configuration or routing can lead to unexpected results.

This creates a fundamental tension: while capability increases, predictability does not necessarily follow.

[The Perceived View: Stronger System, Smoother Flow]

The Limits of Measurement

Benchmarks have played a central role in tracking AI progress, but they are not a complete representation of reality.

They are designed to measure specific tasks under controlled conditions. Over time, they can become less representative of real-world complexity. In some cases, they may even give an overly optimistic picture of performance.

For project environments, this creates a practical challenge. Success cannot be defined only by benchmark scores. It must be defined by how systems behave in production, under real constraints and evolving conditions.

[The Rise and Rise of A.I.]

Human Oversight Is Not Disappearing

There is a common assumption that AI will gradually eliminate the need for human review. In practice, the opposite is happening.

As systems become more capable, they are also used in more complex and high-impact contexts. In these situations, the cost of error increases, and so does the need for oversight.

Simple and repetitive tasks can often be automated effectively. But as complexity grows, human involvement becomes essential, not only to validate outputs but also to interpret them, challenge them, and take responsibility for decisions.

This is particularly important because AI systems often fail in subtle ways: they can produce answers that are coherent and confident even when they are incorrect. Without structured review, these errors can pass unnoticed.

When Systems Drift and Software Degrades

Another dimension of the problem lies in how systems evolve over time.

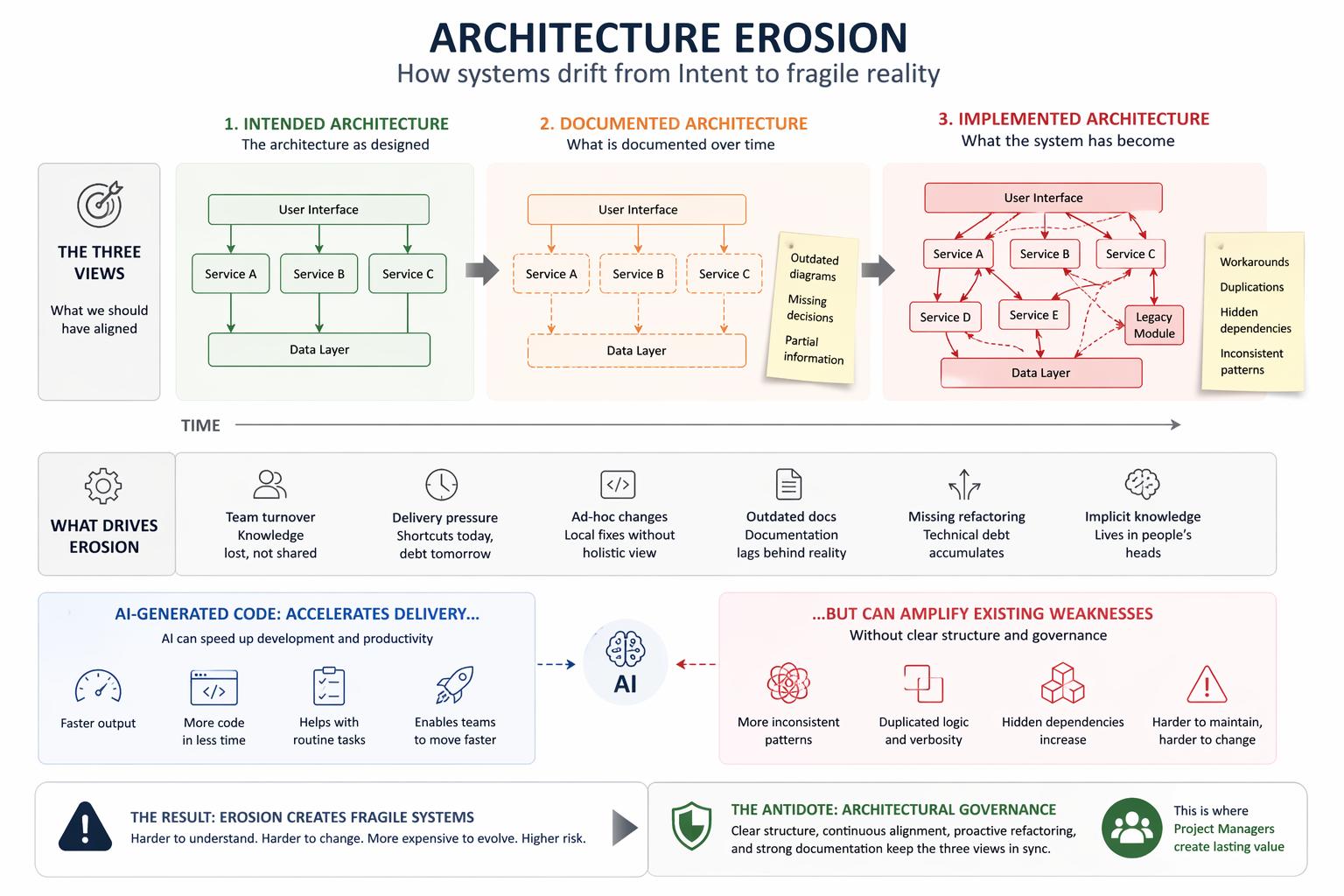

AI systems are not static. They change with updates, new data, and shifting contexts. This can introduce what is often called drift: a gradual change in behaviour that is not always visible but can affect outcomes.
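One way to make drift visible is to compare a recent window of a quality metric against a baseline recorded when the system was approved. The sketch below is a minimal illustration under stated assumptions, not a recommended monitoring method: the function names, the choice of metric, and the threshold of 2.0 standard deviations are all hypothetical and would need to be agreed for each use case.

```python
from statistics import mean, stdev


def drift_score(baseline, recent):
    """Distance between the recent mean and the baseline mean,
    expressed in baseline standard deviations."""
    if len(baseline) < 2 or not recent:
        raise ValueError("need at least two baseline values and one recent value")
    spread = stdev(baseline)
    shift = abs(mean(recent) - mean(baseline))
    if spread == 0:
        # A perfectly flat baseline: any shift at all counts as unbounded drift.
        return 0.0 if shift == 0 else float("inf")
    return shift / spread


def has_drifted(baseline, recent, threshold=2.0):
    """Flag drift when the shift exceeds the (assumed) threshold."""
    return drift_score(baseline, recent) > threshold


# Hypothetical weekly quality scores from human review samples.
baseline_scores = [0.90, 0.92, 0.91, 0.89, 0.93]
recent_scores = [0.80, 0.82, 0.81]

if has_drifted(baseline_scores, recent_scores):
    print("Behaviour has shifted; schedule a re-evaluation.")
```

A check like this does not explain why behaviour changed. It only turns a gradual, invisible shift into an explicit trigger that someone is responsible for acting on.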

At the same time, the software surrounding AI systems follows its own lifecycle. It rarely breaks suddenly. Instead, it deteriorates slowly.

This deterioration occurs when alignment is lost between what the system was designed to be, what is documented, and what is actually implemented. Documentation becomes outdated, knowledge becomes fragmented, and short-term decisions accumulate into long-term complexity.

In this environment, even well-performing models can be embedded in systems that are increasingly fragile.

The introduction of AI-generated code adds another layer to this dynamic. While it can accelerate development, it does not guarantee consistency or maintainability. Without clear structure, it can amplify existing weaknesses rather than resolve them.

[Architecture Erosion]

The Governance Challenge

All of these elements point to a single conclusion.

The main challenge of AI is not capability. It is governance.

AI systems operate across multiple layers: business objectives, technical components, human decisions, and regulatory constraints. Without coordination, these layers can easily become misaligned.

Organizations often respond in one of two ways. Some slow down adoption because they perceive AI as too unpredictable. Others move too quickly and only later realize that risks, inconsistencies, and technical debt have been accumulating.

Both outcomes are costly.

The Role of the Project Manager

This is where project managers play a critical role.

Their value lies in connecting elements that would otherwise remain disconnected. They translate business goals into operational criteria. They ensure that technical systems are aligned with real needs. They define how and when human oversight is required. They maintain visibility over risks, changes, and dependencies.

In the context of AI, project management is less about controlling tasks and more about orchestrating a system that is constantly evolving.

A well-managed AI initiative does not rely on the assumption that the system will behave correctly. It is designed to detect when it does not, and to respond accordingly.

Building a Practical Approach

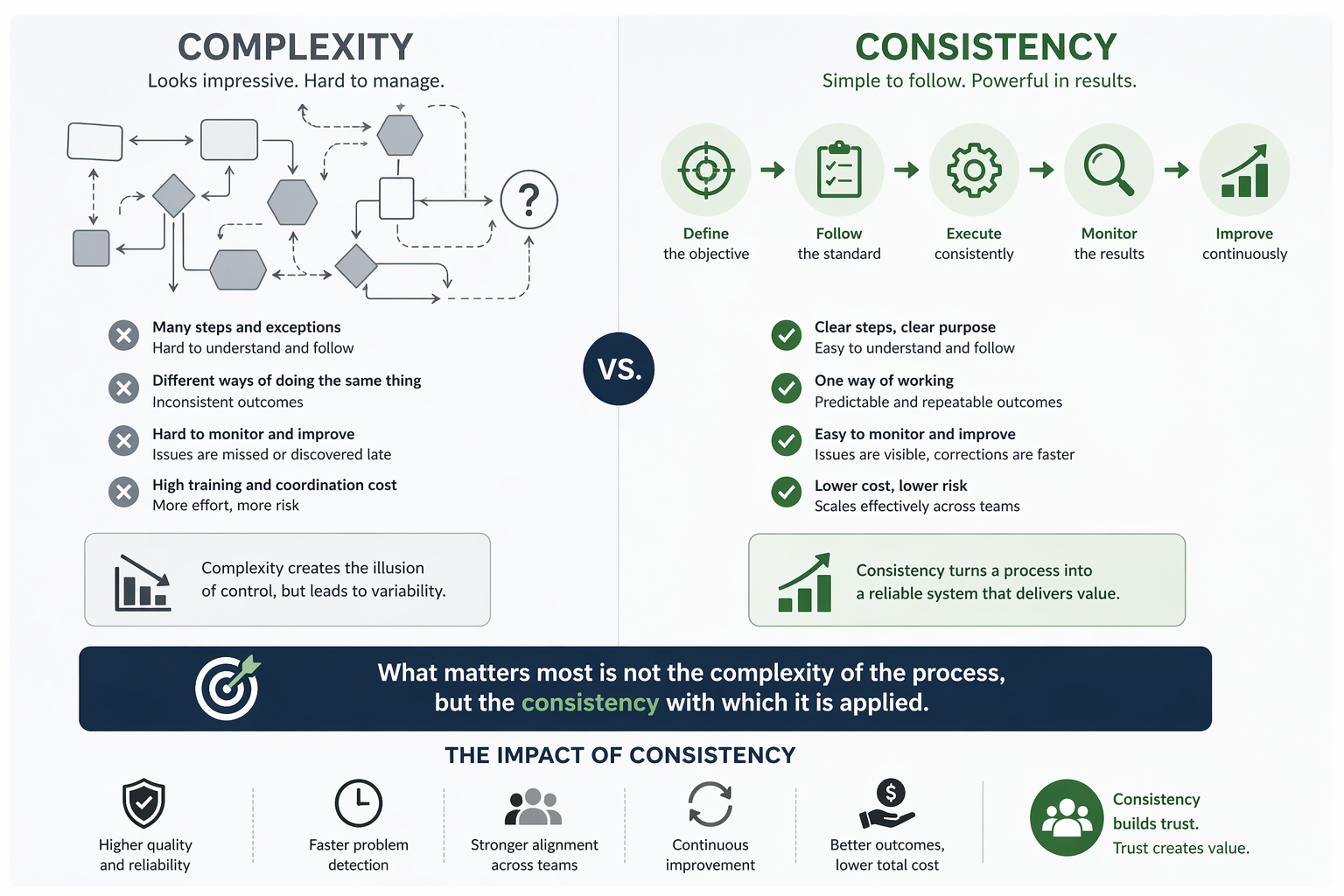

Effective governance does not require complex frameworks from the start. It begins with clarity and discipline.

Organizations need to understand the nature of each AI use case, recognizing that not all problems carry the same level of risk. They need to define, in advance, what acceptable performance looks like and when intervention is required. They need to design human oversight deliberately, rather than treating it as an afterthought.

Equally important is the definition of ownership. Decisions about value, risk, technical implementation, and compliance must be clearly assigned. Without this clarity, both technical and organizational drift become inevitable.
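The points above (risk level per use case, acceptable performance defined in advance, deliberate oversight, and clear ownership) can be written down as a small, explicit policy. The following is a sketch under assumptions rather than a prescribed framework: the tiers, the `min_quality` threshold, the owner labels, and the decision rules are hypothetical placeholders for whatever an organization actually agrees on.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


class Action(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class UseCasePolicy:
    name: str
    risk_tier: RiskTier
    min_quality: float  # acceptable performance, agreed in advance
    owner: str          # who is accountable when intervention is needed


def decide_action(policy, observed_quality):
    """Apply the (assumed) rules: escalate below the agreed threshold,
    auto-approve only low-risk use cases, review everything else."""
    if observed_quality < policy.min_quality:
        return Action.ESCALATE
    if policy.risk_tier is RiskTier.LOW:
        return Action.AUTO_APPROVE
    return Action.HUMAN_REVIEW


# Hypothetical use cases.
faq = UseCasePolicy("faq_summaries", RiskTier.LOW, 0.85, "product_owner")
credit = UseCasePolicy("credit_notes", RiskTier.HIGH, 0.95, "risk_officer")

for policy, score in [(faq, 0.90), (credit, 0.97), (credit, 0.91)]:
    action = decide_action(policy, score)
    print(f"{policy.name}: {action.value} (owner: {policy.owner})")
```

The value of such a policy lies less in the code than in the conversation it forces: someone has to name the threshold, the tier, and the owner before the system goes live.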

[AI Governance Workflow]

Conclusion

The question of whether AI is improving or deteriorating does not lead to useful decisions.

The more relevant insight is that AI models are improving rapidly, while AI systems remain dynamic, context-dependent, and difficult to control. They can drift, they can be misinterpreted, and they can become embedded in software that slowly loses its coherence.

In this context, project management is not an optional layer. It is a necessary condition for turning technical capability into reliable and scalable outcomes.

AI does not reduce the need for structure. It increases the cost of operating without it.

As organizations move forward, the real differentiator will not be who has access to AI, but who is able to manage it effectively.

Closing Reflection

As AI becomes part of everyday operations, each organization faces the same set of questions.

How much of your AI-driven work can truly be trusted without review?

Do you know when a system has changed enough to require re-evaluation?

Are you measuring success only in terms of performance, or also in terms of stability, risk, and long-term sustainability?

The answers to these questions will define not only how AI is used, but how much value it ultimately creates.
